The New York Times publish a us-states.csv file with Covid cases and deaths by state over time. (I believe size here is measured in kilobytes: the GitHub API documentation isn’t clear on this point.) Joining across different filesĪll of these examples have worked with JSON data piped into the tool-but you can also pass one or more files, of different formats, in a way that lets you execute joins against them.Īs an example, let’s combine two sources of data. Select sum(size), sum(stargazers_count) from stdin We can run aggregate queries too-let’s add up the total size and total number of stars across all of those repositories: $ curl -s '' \ If I wanted to generate a LaTeX table of the top repos by stars I could do this: $ curl -s '' \

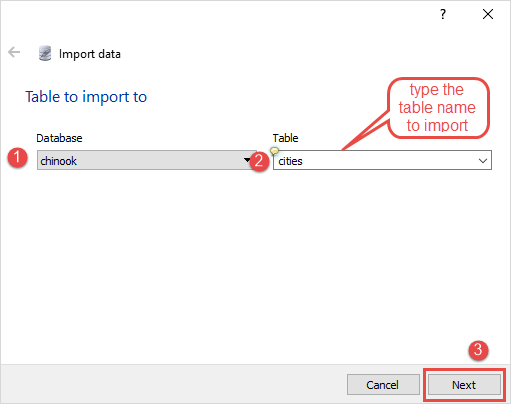

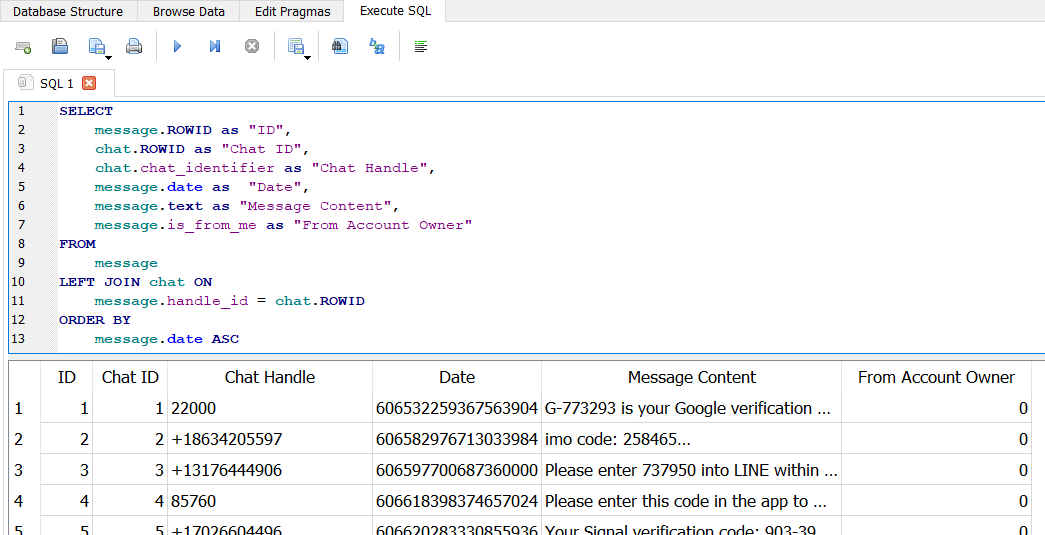

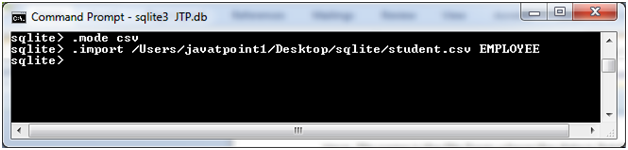

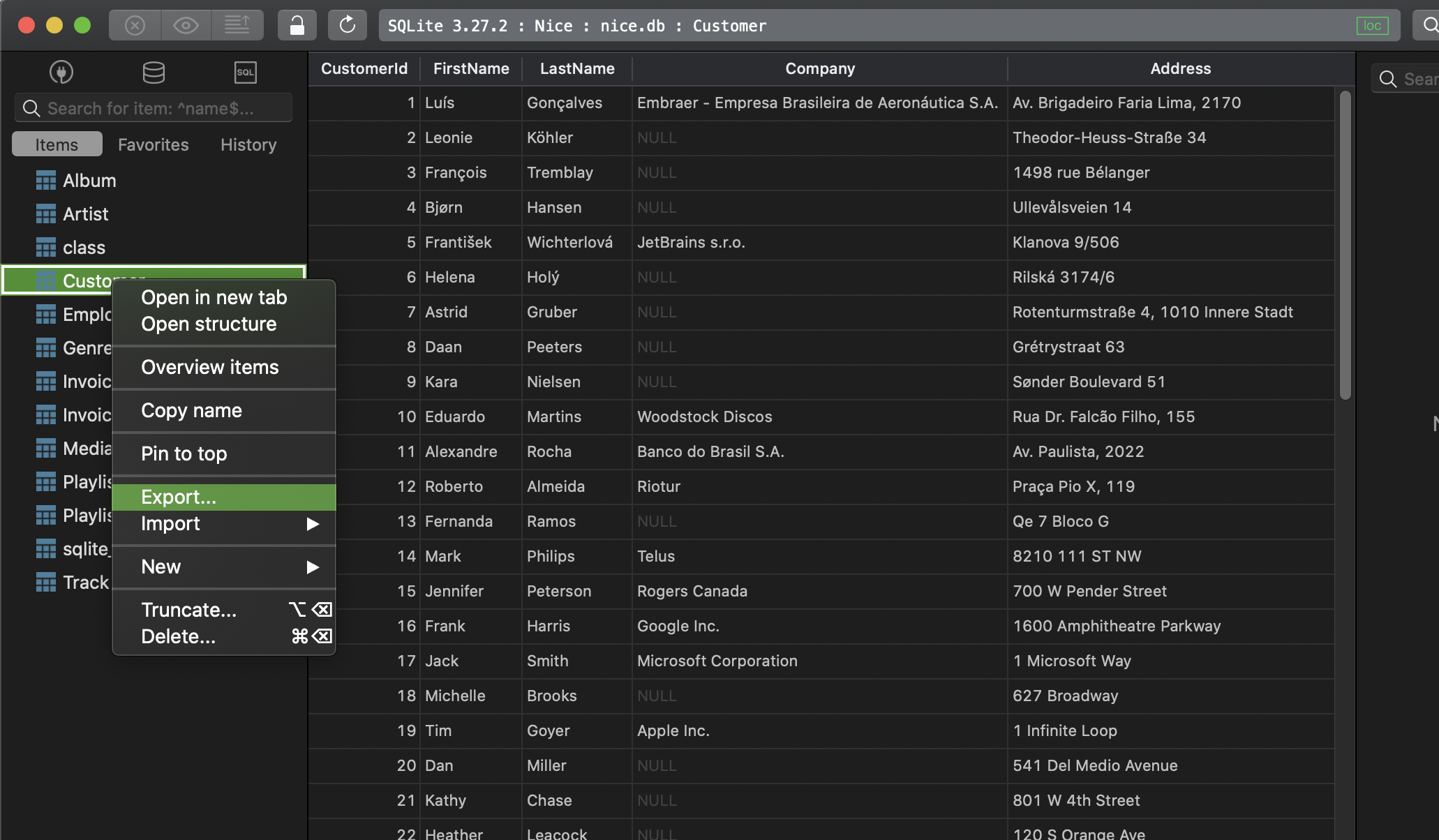

The -t option supports a number of different formats, specified using -fmt. Or we can use -csv to get back CSV: $ curl -s '' \ The -t option here means “output as a formatted table”-without that option we get JSON: $ curl -s '' \ The query selects three of the JSON properties, renames stargazers_count to stars, sorts by stars and return the first three. Stdin is the temporary table created for the data piped in to the tool. Select full_name, forks_count, stargazers_count as stars GitHub make those available via an authentication-optional API endpoint at -which returns JSON that looks like this (simplified): My Dogsheep GitHub organization has a number of repositories. It can import data, execute SQL and output the result in a one-liner, without needing any temporary database files along the way. The new sqlite-utils memory command ( full documentation here) operates against a temporary, in-memory SQLite database. This week I realized that I had most of the pieces in place to reduce this to a single step. Using SQL to re-shape data is really useful-since sqlite-utils can output in multiple different formats, I frequently find myself loading in a CSV file and exporting it back out as JSON, or vice-versa. Processing data with this involves two steps: first import it into a temp.db file, then use sqlite-utils query to run queries and output the results. Sqlite-utils already offers a mechanism for importing CSV and JSON data into a SQLite database file, in the form of the sqlite-utils insert command. I’ve recorded this video demonstrating the new feature-with full accompanying notes below. You can install it using brew install sqlite-utils or pip install sqlite-utils. The new feature is part of sqlite-utils 3.10, which I released this morning. The new sqlite-utils memory command can import CSV and JSON data directly into an in-memory SQLite database, combine and query it using SQL and output the results as CSV, JSON or various other formats of plain text tables. In order to not have to CAST() each TEXT column whenever working with dates or numbers, the following schema.Joining CSV and JSON data with an in-memory SQLite database I kept thinking that there should be a simpler way: what if I use SQLite for that? In the first time I resorted to create a database on PostgreSQL and import the CSV file into it, but after that I never remember the CSV import syntax and it still requires a daemon running just for that. The problem is that q isn’t that straightforward to install, as apparently it is not available via apt nor pip, nor one is able to easily change the data once it’s imported, like in a regular database. When facing this issue, at first I thought about using harelba/q to query the CSV files directly in the command line.

While it’s true that most of the users of this report are used to deal with CSV files, be them developers or accountants experts in handling spreadsheets, this is definitely not the most user-friendly way of offering insights into billing data.

The only problem is that the generated report is a CSV file, shifting the responsibility of filtering and visualizing data to the user. Currently, the only way to get detailed information about it is via the Get usage report button in the project/organization billing page. GitHub offers a very superficial view of how GitHub Actions runners are spending their minutes on private repositories.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed